id int64 599M 3.48B | number int64 1 7.8k | title stringlengths 1 290 | state stringclasses 2 values | comments listlengths 0 30 | created_at timestamp[s]date 2020-04-14 10:18:02 2025-10-05 06:37:50 | updated_at timestamp[s]date 2020-04-27 16:04:17 2025-10-05 10:32:43 | closed_at timestamp[s]date 2020-04-14 12:01:40 2025-10-01 13:56:03 ⌀ | body stringlengths 0 228k ⌀ | user stringlengths 3 26 | html_url stringlengths 46 51 | pull_request dict | is_pull_request bool 2 classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,147,898,946 | 3,778 | Not be able to download dataset - "Newsroom" | closed | [

"Hi @Darshan2104, thanks for reporting.\r\n\r\nPlease note that at Hugging Face we do not host the data of this dataset, but just a loading script pointing to the host of the data owners.\r\n\r\nApparently the data owners changed their data host server. After googling it, I found their new website at: https://lil.n... | 2022-02-23T10:15:50 | 2022-02-23T17:05:04 | 2022-02-23T13:26:40 | Hello,

I tried to download the **newsroom** dataset, but it didn't work for me. It told me to **download it manually**!

The link for the manual download didn't work either! It is showing some ad or something!

If anybody has solved this issue please help me out or if somebody has this dataset please share your google driv... | Darshan2104 | https://github.com/huggingface/datasets/issues/3778 | null | false |

1,147,232,875 | 3,777 | Start removing canonical datasets logic | closed | [

"I'm not sure if the documentation explains why the dataset identifiers might have a namespace or not (the user/org): 'glue' vs 'severo/glue'. Do you think we should explain it, and relate it to the GitHub/Hub distinction?",

"> I'm not sure if the documentation explains why the dataset identifiers might have a na... | 2022-02-22T18:23:30 | 2022-02-24T15:04:37 | 2022-02-24T15:04:36 | I updated the source code and the documentation to start removing the "canonical datasets" logic.

Indeed this makes the documentation confusing and we don't want this distinction anymore in the future. Ideally users should share their datasets on the Hub directly.

### Changes

- the documentation about dataset ... | lhoestq | https://github.com/huggingface/datasets/pull/3777 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3777",

"html_url": "https://github.com/huggingface/datasets/pull/3777",

"diff_url": "https://github.com/huggingface/datasets/pull/3777.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3777.patch",

"merged_at": "2022-02-24T15:04... | true |

1,146,932,871 | 3,776 | Allow download only some files from the Wikipedia dataset | open | [

"Hi @jvanz, thank you for your proposal.\r\n\r\nIn fact, we are aware that the problem you mention is very common. Because of that, we are currently working on implementing a new version of wikipedia on the Hub, with all data preprocessed (no need to use Apache Beam), from where you will be able to use `data_fil... | 2022-02-22T13:46:41 | 2022-02-22T14:50:02 | null | **Is your feature request related to a problem? Please describe.**

The Wikipedia dataset can be really big. This is a problem if you want to use it locally on a laptop with the Apache Beam `DirectRunner`, even if your laptop has a considerable amount of memory (e.g. 32 GB).

**Describe the solution you'd like**

I... | jvanz | https://github.com/huggingface/datasets/issues/3776 | null | false |

1,146,849,454 | 3,775 | Update gigaword card and info | closed | [

"I think it actually comes from an issue here:\r\n\r\nhttps://github.com/huggingface/datasets/blob/810b12f763f5cf02f2e43565b8890d278b7398cd/src/datasets/utils/file_utils.py#L575-L579\r\n\r\nand \r\n\r\nhttps://github.com/huggingface/datasets/blob/810b12f763f5cf02f2e43565b8890d278b7398cd/src/datasets/utils/streaming... | 2022-02-22T12:27:16 | 2022-02-28T11:35:24 | 2022-02-28T11:35:24 | Reported on the forum: https://discuss.huggingface.co/t/error-loading-dataset/14999 | mariosasko | https://github.com/huggingface/datasets/pull/3775 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3775",

"html_url": "https://github.com/huggingface/datasets/pull/3775",

"diff_url": "https://github.com/huggingface/datasets/pull/3775.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3775.patch",

"merged_at": "2022-02-28T11:35... | true |

1,146,843,177 | 3,774 | Fix reddit_tifu data URL | closed | [] | 2022-02-22T12:21:15 | 2022-02-22T12:38:45 | 2022-02-22T12:38:44 | Fix #3773. | albertvillanova | https://github.com/huggingface/datasets/pull/3774 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3774",

"html_url": "https://github.com/huggingface/datasets/pull/3774",

"diff_url": "https://github.com/huggingface/datasets/pull/3774.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3774.patch",

"merged_at": "2022-02-22T12:38... | true |

1,146,758,335 | 3,773 | Checksum mismatch for the reddit_tifu dataset | closed | [

"Thanks for reporting, @anna-kay. We are fixing it.",

"@albertvillanova Thank you for the fast response! However I am still getting the same error:\r\n\r\nDownloading: 2.23kB [00:00, ?B/s]\r\nTraceback (most recent call last):\r\n File \"C:\\Users\\Anna\\PycharmProjects\\summarization\\main.py\", line 17, in <mo... | 2022-02-22T10:57:07 | 2022-02-25T19:27:49 | 2022-02-22T12:38:44 | ## Describe the bug

A checksum mismatch occurs when downloading the reddit_tifu data (both long & short).

## Steps to reproduce the bug

reddit_tifu_dataset = load_dataset('reddit_tifu', 'long')

## Expected results

The expected result is for the dataset to be downloaded and cached locally.

## Actual results

File "... | anna-kay | https://github.com/huggingface/datasets/issues/3773 | null | false |

1,146,718,630 | 3,772 | Fix: dataset name is stored in keys | closed | [] | 2022-02-22T10:20:37 | 2022-02-22T11:08:34 | 2022-02-22T11:08:33 | null | thomasw21 | https://github.com/huggingface/datasets/pull/3772 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3772",

"html_url": "https://github.com/huggingface/datasets/pull/3772",

"diff_url": "https://github.com/huggingface/datasets/pull/3772.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3772.patch",

"merged_at": "2022-02-22T11:08... | true |

1,146,561,140 | 3,771 | Fix DuplicatedKeysError on msr_sqa dataset | closed | [] | 2022-02-22T07:44:24 | 2022-02-22T08:12:40 | 2022-02-22T08:12:39 | Fix #3770. | albertvillanova | https://github.com/huggingface/datasets/pull/3771 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3771",

"html_url": "https://github.com/huggingface/datasets/pull/3771",

"diff_url": "https://github.com/huggingface/datasets/pull/3771.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3771.patch",

"merged_at": "2022-02-22T08:12... | true |

1,146,336,667 | 3,770 | DuplicatedKeysError on msr_sqa dataset | closed | [

"Thanks for reporting, @kolk.\r\n\r\nWe are fixing it. "

] | 2022-02-22T00:43:33 | 2022-02-22T08:12:39 | 2022-02-22T08:12:39 | ### Describe the bug

Failure to generate dataset msr_sqa because of duplicate keys.

### Steps to reproduce the bug

```

from datasets import load_dataset

load_dataset("msr_sqa")

```

### Expected results

The example keys should be unique.

### Actual results

```

>>> load_dataset("msr_sqa")

Downloading:

6... | kolk | https://github.com/huggingface/datasets/issues/3770 | null | false |

1,146,258,023 | 3,769 | `dataset = dataset.map()` causes faiss index lost | open | [

"Hi ! Indeed `map` is dropping the index right now, because one can create a dataset with more or fewer rows using `map` (and therefore the index might not be relevant anymore)\r\n\r\nI guess we could check the resulting dataset length, and if the user hasn't changed the dataset size we could keep the index, what d... | 2022-02-21T21:59:23 | 2022-06-27T14:56:29 | null | ## Describe the bug

Assigning the resulting dataset back to the original dataset causes the faiss index to be lost.

## Steps to reproduce the bug

`my_dataset` is a regularly loaded dataset. It is part of a custom dataset structure.

```python

self.dataset.add_faiss_index('embeddings')

self.dataset.list_indexes()

# ['embeddin... | Oaklight | https://github.com/huggingface/datasets/issues/3769 | null | false |

1,146,102,442 | 3,768 | Fix HfFileSystem docstring | closed | [] | 2022-02-21T18:14:40 | 2022-02-22T09:13:03 | 2022-02-22T09:13:02 | null | lhoestq | https://github.com/huggingface/datasets/pull/3768 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3768",

"html_url": "https://github.com/huggingface/datasets/pull/3768",

"diff_url": "https://github.com/huggingface/datasets/pull/3768.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3768.patch",

"merged_at": "2022-02-22T09:13... | true |

1,146,036,648 | 3,767 | Expose method and fix param | closed | [] | 2022-02-21T16:57:47 | 2022-02-22T08:35:03 | 2022-02-22T08:35:02 | A fix + expose a new method, following https://github.com/huggingface/datasets/pull/3670 | severo | https://github.com/huggingface/datasets/pull/3767 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3767",

"html_url": "https://github.com/huggingface/datasets/pull/3767",

"diff_url": "https://github.com/huggingface/datasets/pull/3767.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3767.patch",

"merged_at": "2022-02-22T08:35... | true |

1,145,829,289 | 3,766 | Fix head_qa data URL | closed | [] | 2022-02-21T13:52:50 | 2022-02-21T14:39:20 | 2022-02-21T14:39:19 | Fix #3758. | albertvillanova | https://github.com/huggingface/datasets/pull/3766 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3766",

"html_url": "https://github.com/huggingface/datasets/pull/3766",

"diff_url": "https://github.com/huggingface/datasets/pull/3766.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3766.patch",

"merged_at": "2022-02-21T14:39... | true |

1,145,126,881 | 3,765 | Update URL for tagging app | closed | [

"Oh, this URL shouldn't be updated to the tagging app as it's actually used for creating the README - closing this."

] | 2022-02-20T20:34:31 | 2022-02-20T20:36:10 | 2022-02-20T20:36:06 | This PR updates the URL for the tagging app to be the one on Spaces. | lewtun | https://github.com/huggingface/datasets/pull/3765 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3765",

"html_url": "https://github.com/huggingface/datasets/pull/3765",

"diff_url": "https://github.com/huggingface/datasets/pull/3765.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3765.patch",

"merged_at": null

} | true |

1,145,107,050 | 3,764 | ! | closed | [] | 2022-02-20T19:05:43 | 2022-02-21T08:55:58 | 2022-02-21T08:55:58 | ## Dataset viewer issue for '*name of the dataset*'

**Link:** *link to the dataset viewer page*

*short description of the issue*

Am I the one who added this dataset ? Yes-No

| LesiaFedorenko | https://github.com/huggingface/datasets/issues/3764 | null | false |

1,145,099,878 | 3,763 | It's not possible download `20200501.pt` dataset | closed | [

"Hi @jvanz, thanks for reporting.\r\n\r\nPlease note that the Wikimedia website no longer hosts Wikipedia dumps for such old dates.\r\n\r\nFor a list of accessible dump dates of `pt` Wikipedia, please see: https://dumps.wikimedia.org/ptwiki/\r\n\r\nYou can load for example `20220220` `pt` Wikipedia:\r\n```python\r\n... | 2022-02-20T18:34:58 | 2022-02-21T12:06:12 | 2022-02-21T09:25:06 | ## Describe the bug

The dataset `20200501.pt` is broken.

The available datasets: https://dumps.wikimedia.org/ptwiki/

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("wikipedia", "20200501.pt", beam_runner='DirectRunner')

```

## Expected results

I expect t... | jvanz | https://github.com/huggingface/datasets/issues/3763 | null | false |

1,144,849,557 | 3,762 | `Dataset.class_encode` should support custom class names | closed | [

"Hi @Dref360, thanks a lot for your proposal.\r\n\r\nIt totally makes sense to have more flexibility when class encoding, I agree.\r\n\r\nYou could even further customize the class encoding by passing an instance of `ClassLabel` itself (instead of replicating `ClassLabel` instantiation arguments as `Dataset.class_e... | 2022-02-19T21:21:45 | 2022-02-21T12:16:35 | 2022-02-21T12:16:35 | I can make a PR, just wanted approval before starting.

**Is your feature request related to a problem? Please describe.**

It is often the case that classes are not in alphabetical order. The current `class_encode_column` sorts the classes before indexing.

https://github.com/huggingface/datasets/blob/master/sr... | Dref360 | https://github.com/huggingface/datasets/issues/3762 | null | false |
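To illustrate the ordering concern raised in #3762 above, here is a minimal, library-free sketch. The helper `encode_labels` and its `names` parameter are hypothetical and are not part of the `datasets` API; the sketch only contrasts alphabetical ordering with a caller-supplied class order.

```python
from typing import List, Optional, Sequence, Tuple


def encode_labels(
    values: Sequence[str], names: Optional[Sequence[str]] = None
) -> Tuple[List[int], List[str]]:
    """Map string labels to integer ids (hypothetical helper, not the library API).

    If `names` is None, the classes are sorted alphabetically, which is the
    behaviour the issue describes. Otherwise the given order is kept.
    """
    ordered = sorted(set(values)) if names is None else list(names)
    index = {name: i for i, name in enumerate(ordered)}
    return [index[v] for v in values], ordered


labels = ["low", "high", "medium"]

# Alphabetical order: "high" becomes 0, which loses the natural low < medium < high order.
sorted_ids, sorted_names = encode_labels(labels)

# Caller-supplied order keeps the intended ranking.
custom_ids, custom_names = encode_labels(labels, names=["low", "medium", "high"])
```

With a custom order, the integer ids follow the ranking the user intended rather than the alphabet, which is the flexibility the request asks for.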

1,144,830,702 | 3,761 | Know your data for HF hub | closed | [

"Hi @Muhtasham you should take a look at https://huggingface.co/blog/data-measurements-tool and accompanying demo app at https://huggingface.co/spaces/huggingface/data-measurements-tool\r\n\r\nWe would be interested in your feedback. cc @meg-huggingface @sashavor @yjernite "

] | 2022-02-19T19:48:47 | 2022-02-21T14:15:23 | 2022-02-21T14:15:23 | **Is your feature request related to a problem? Please describe.**

It would be great to be able to understand datasets with the goal of improving data quality and helping mitigate fairness and bias issues.

**Describe the solution you'd like**

Something like https://knowyourdata.withgoogle.com/ for HF hub | Muhtasham | https://github.com/huggingface/datasets/issues/3761 | null | false |

1,144,804,558 | 3,760 | Unable to view the Gradio flagged call back dataset | closed | [

"Hi @kingabzpro.\r\n\r\nI think you need to create a loading script that creates the dataset from the CSV file and the image paths.\r\n\r\nAs example, you could have a look at the Food-101 dataset: https://huggingface.co/datasets/food101\r\n- Loading script: https://huggingface.co/datasets/food101/blob/main/food101... | 2022-02-19T17:45:08 | 2022-03-22T07:12:11 | 2022-03-22T07:12:11 | ## Dataset viewer issue for '*savtadepth-flags*'

**Link:** *[savtadepth-flags](https://huggingface.co/datasets/kingabzpro/savtadepth-flags)*

*With Gradio 2.8.1, the dataset viewer stopped working. I tried to add values manually, but it's not working. The dataset is also not showing the link with the app https://h... | kingabzpro | https://github.com/huggingface/datasets/issues/3760 | null | false |

1,143,400,770 | 3,759 | Rename GenerateMode to DownloadMode | closed | [

"Thanks! Used here: https://github.com/huggingface/datasets-preview-backend/blob/main/src/datasets_preview_backend/models/dataset.py#L26 :) "

] | 2022-02-18T16:53:53 | 2022-02-22T13:57:24 | 2022-02-22T12:22:52 | This PR:

- Renames `GenerateMode` to `DownloadMode`

- Implements `DeprecatedEnum`

- Deprecates `GenerateMode`

Close #769. | albertvillanova | https://github.com/huggingface/datasets/pull/3759 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3759",

"html_url": "https://github.com/huggingface/datasets/pull/3759",

"diff_url": "https://github.com/huggingface/datasets/pull/3759.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3759.patch",

"merged_at": "2022-02-22T12:22... | true |

1,143,366,393 | 3,758 | head_qa file missing | closed | [

"We usually find issues with files hosted at Google Drive...\r\n\r\nIn this case we download the Google Drive Virus scan warning instead of the data file.",

"Fixed: https://huggingface.co/datasets/head_qa/viewer/en/train. Thanks\r\n\r\n<img width=\"1551\" alt=\"Capture d’écran 2022-02-28 à 15 29 04\" src=\"http... | 2022-02-18T16:32:43 | 2022-02-28T14:29:18 | 2022-02-21T14:39:19 | ## Describe the bug

A file for the `head_qa` dataset is missing (https://drive.google.com/u/0/uc?export=download&id=1a_95N5zQQoUCq8IBNVZgziHbeM-QxG2t/HEAD_EN/train_HEAD_EN.json)

## Steps to reproduce the bug

```python

>>> from datasets import load_dataset

>>> load_dataset("head_qa", name="en")

```

## Expec... | severo | https://github.com/huggingface/datasets/issues/3758 | null | false |

1,143,300,880 | 3,757 | Add perplexity to metrics | closed | [

"Awesome thank you ! The implementation of the parent `Metric` class was assuming that all metrics were supposed to have references/predictions pairs - I just changed that so you don't have to override `compute()`. I took the liberty of doing the changes directly inside this PR to make sure it works as expected wit... | 2022-02-18T15:52:23 | 2022-02-25T17:13:34 | 2022-02-25T17:13:34 | Adding perplexity metric

This code differs from the code in [this](https://huggingface.co/docs/transformers/perplexity) HF blog post because the blogpost code fails in at least the following circumstances:

- returns nans whenever the stride = 1

- hits a runtime error when the stride is significantly larger than th... | emibaylor | https://github.com/huggingface/datasets/pull/3757 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3757",

"html_url": "https://github.com/huggingface/datasets/pull/3757",

"diff_url": "https://github.com/huggingface/datasets/pull/3757.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3757.patch",

"merged_at": "2022-02-25T17:13... | true |

1,143,273,825 | 3,756 | Images get decoded when using `map()` with `input_columns` argument on a dataset | closed | [

"Hi! If I'm not mistaken, this behavior is intentional, but I agree it could be more intuitive.\r\n\r\n@albertvillanova Do you remember why you decided not to decode columns in the `Audio` feature PR when `input_columns` is not `None`? IMO we should decode those columns, and we don't even have to use lazy structure... | 2022-02-18T15:35:38 | 2022-12-13T16:59:06 | 2022-12-13T16:59:06 | ## Describe the bug

The `datasets.features.Image` feature class decodes image data by default. Expectedly, when indexing a dataset or using the `map()` method, images are returned as PIL Image instances.

However, when calling `map()` and setting a specific data column with the `input_columns` argument, the image ... | kklemon | https://github.com/huggingface/datasets/issues/3756 | null | false |

1,143,032,961 | 3,755 | Cannot preview dataset | closed | [

"Thanks for reporting. The dataset viewer depends on some backend treatments, and for now, they might take some hours to get processed. We're working on improving it.",

"It has finally been processed. Thanks for the patience.",

"Thanks for the info @severo !"

] | 2022-02-18T13:06:45 | 2022-02-19T14:30:28 | 2022-02-18T15:41:33 | ## Dataset viewer issue for '*rubrix/news*'

**Link:** https://huggingface.co/datasets/rubrix/news

Cannot see the dataset preview:

```

Status code: 400

Exception: Status400Error

Message: Not found. Cache is waiting to be refreshed.

```

Am I the one who added thi... | frascuchon | https://github.com/huggingface/datasets/issues/3755 | null | false |

1,142,886,536 | 3,754 | Overflowing indices in `select` | closed | [

"Fixed on master (see https://github.com/huggingface/datasets/pull/3719).",

"Awesome, I did not find that one! Thanks."

] | 2022-02-18T11:30:52 | 2022-02-18T11:38:23 | 2022-02-18T11:38:23 | ## Describe the bug

The `Dataset.select` function seems to accept indices that are larger than the dataset size and seems to effectively use `index % len(ds)`.

## Steps to reproduce the bug

```python

from datasets import Dataset

ds = Dataset.from_dict({"test": [1,2,3]})

ds = ds.select(range(5))

print(ds)

p... | lvwerra | https://github.com/huggingface/datasets/issues/3754 | null | false |
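The behaviour reported in #3754 above can be contrasted with an explicit bounds check. The following is only a sketch in plain Python: `validate_indices` is a hypothetical helper, and allowing negative indices is an assumption made here, not something the issue states about the library.

```python
from typing import Iterable, List


def validate_indices(indices: Iterable[int], size: int) -> List[int]:
    """Return the indices as a list, raising if any falls outside [-size, size).

    Hypothetical helper: it rejects out-of-range indices instead of
    silently wrapping them around with a modulo.
    """
    checked = list(indices)
    for i in checked:
        if not -size <= i < size:
            raise IndexError(f"Index {i} out of range for dataset of size {size}.")
    return checked


rows = [1, 2, 3]

ok = validate_indices(range(3), len(rows))

try:
    validate_indices(range(5), len(rows))
    overflow_detected = False
except IndexError:
    overflow_detected = True
```

Failing loudly here avoids returning duplicated rows when the requested range is longer than the dataset.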

1,142,821,144 | 3,753 | Expanding streaming capabilities | open | [

"Related to: https://github.com/huggingface/datasets/issues/3444",

"Cool ! `filter` will be very useful. There can be a filter that you can apply on a streaming dataset:\r\n```python\r\nload_dataset(..., streaming=True).filter(lambda x: x[\"lang\"] == \"sw\")\r\n```\r\n\r\nOtherwise if you want to apply a filter ... | 2022-02-18T10:45:41 | 2025-03-19T14:50:14 | null | Some ideas for a few features that could be useful when working with large datasets in streaming mode.

## `filter` for `IterableDataset`

Adding filtering to streaming datasets would be useful in several scenarios:

- filter a dataset with many languages for a subset of languages

- filter a dataset for specific li... | lvwerra | https://github.com/huggingface/datasets/issues/3753 | null | false |
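The filtering idea proposed in #3753 above can be sketched without the library as a lazy generator. `lazy_filter` is a hypothetical name and the example records are made up; the point is only that examples are tested one at a time, so nothing has to be loaded in full.

```python
from typing import Any, Callable, Dict, Iterable, Iterator

Example = Dict[str, Any]


def lazy_filter(
    examples: Iterable[Example], predicate: Callable[[Example], bool]
) -> Iterator[Example]:
    """Yield only the examples for which `predicate` returns True.

    Nothing is materialised: each example is pulled from the source,
    tested, and either yielded or dropped.
    """
    for example in examples:
        if predicate(example):
            yield example


# A stand-in for a streamed dataset (made-up records).
stream = iter(
    [
        {"lang": "sw", "text": "habari"},
        {"lang": "en", "text": "hello"},
        {"lang": "sw", "text": "asante"},
    ]
)

swahili_only = list(lazy_filter(stream, lambda x: x["lang"] == "sw"))
```

Because the generator never holds more than one example at a time, the same pattern applies to sources that are too large to fit in memory.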

1,142,627,889 | 3,752 | Update metadata JSON for cats_vs_dogs dataset | closed | [] | 2022-02-18T08:32:53 | 2022-02-18T14:56:12 | 2022-02-18T14:56:11 | Note that the number of examples in the train split was already fixed in the dataset card.

Fix #3750. | albertvillanova | https://github.com/huggingface/datasets/pull/3752 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3752",

"html_url": "https://github.com/huggingface/datasets/pull/3752",

"diff_url": "https://github.com/huggingface/datasets/pull/3752.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3752.patch",

"merged_at": "2022-02-18T14:56... | true |

1,142,609,327 | 3,751 | Fix typo in train split name | closed | [] | 2022-02-18T08:18:04 | 2022-02-18T14:28:52 | 2022-02-18T14:28:52 | In the README guide (and consequently in many datasets) there was a typo in the train split name:

```

| Tain | Valid | Test |

```

This PR:

- fixes the typo in the train split name

- fixes the column alignment of the split tables

in the README guide and in all datasets. | albertvillanova | https://github.com/huggingface/datasets/pull/3751 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3751",

"html_url": "https://github.com/huggingface/datasets/pull/3751",

"diff_url": "https://github.com/huggingface/datasets/pull/3751.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3751.patch",

"merged_at": "2022-02-18T14:28... | true |

1,142,408,331 | 3,750 | `NonMatchingSplitsSizesError` for cats_vs_dogs dataset | closed | [

"Thanks for reporting @jaketae. We are fixing it. "

] | 2022-02-18T05:46:39 | 2022-02-18T14:56:11 | 2022-02-18T14:56:11 | ## Describe the bug

Cannot download cats_vs_dogs dataset due to `NonMatchingSplitsSizesError`.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("cats_vs_dogs")

```

## Expected results

Loading is successful.

## Actual results

```

NonMatchingSplitsSiz... | jaketae | https://github.com/huggingface/datasets/issues/3750 | null | false |

1,142,156,678 | 3,749 | Add tqdm arguments | closed | [

"Hi ! Thanks this will be very useful :)\r\n\r\nIt looks like there are some changes in the github diff that are not related to your contribution, can you try fixing this by merging `master` into your PR, or create a new PR from an updated version of `master` ?",

"I have already solved the conflict on this latest... | 2022-02-18T01:34:46 | 2022-03-08T09:38:48 | 2022-03-08T09:38:48 | In this PR, tqdm arguments can be passed to the map() function and such, in order to be more flexible. | penguinwang96825 | https://github.com/huggingface/datasets/pull/3749 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3749",

"html_url": "https://github.com/huggingface/datasets/pull/3749",

"diff_url": "https://github.com/huggingface/datasets/pull/3749.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3749.patch",

"merged_at": null

} | true |

1,142,128,763 | 3,748 | Add tqdm arguments | closed | [] | 2022-02-18T00:47:55 | 2022-02-18T00:59:15 | 2022-02-18T00:59:15 | In this PR, there are two changes.

1. The progress bar can now be shown by adding the length of the iterator.

2. Pass in `tqdm_kwargs` to allow more flexible control of the tqdm library. | penguinwang96825 | https://github.com/huggingface/datasets/pull/3748 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3748",

"html_url": "https://github.com/huggingface/datasets/pull/3748",

"diff_url": "https://github.com/huggingface/datasets/pull/3748.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3748.patch",

"merged_at": null

} | true |

1,141,688,854 | 3,747 | Passing invalid subset should throw an error | open | [] | 2022-02-17T18:16:11 | 2022-02-17T18:16:11 | null | ## Describe the bug

Only some datasets have a subset (as in `load_dataset(name, subset)`). If you pass an invalid subset, an error should be thrown.

## Steps to reproduce the bug

```python

import datasets

datasets.load_dataset('rotten_tomatoes', 'asdfasdfa')

```

## Expected results

This should break, since ... | jxmorris12 | https://github.com/huggingface/datasets/issues/3747 | null | false |

1,141,612,810 | 3,746 | Use the same seed to shuffle shards and metadata in streaming mode | closed | [] | 2022-02-17T17:06:31 | 2022-02-23T15:00:59 | 2022-02-23T15:00:58 | When shuffling in streaming mode, those two entangled lists are shuffled independently. In this PR I changed this to shuffle the lists of same length with the exact same seed, in order for the files and metadata to still be aligned.

```python

gen_kwargs = {

"files": [os.path.join(data_dir, filename) for filename... | lhoestq | https://github.com/huggingface/datasets/pull/3746 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3746",

"html_url": "https://github.com/huggingface/datasets/pull/3746",

"diff_url": "https://github.com/huggingface/datasets/pull/3746.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3746.patch",

"merged_at": "2022-02-23T15:00... | true |
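The approach described in #3746 above, shuffling equal-length lists with the same seed so they stay aligned, can be sketched with the standard library. This is not the library's implementation: `shuffle_aligned` is a hypothetical helper, the file names are made up, and `random.Random` is used here only for illustration.

```python
import random
from typing import Any, List, Sequence


def shuffle_aligned(lists: Sequence[Sequence[Any]], seed: int) -> List[List[Any]]:
    """Shuffle several equal-length lists with the same permutation.

    A fresh generator with the same seed is created for every list, so each
    list of the same length is permuted identically.
    """
    shuffled = []
    for items in lists:
        rng = random.Random(seed)  # same seed -> same permutation for same length
        copy = list(items)
        rng.shuffle(copy)
        shuffled.append(copy)
    return shuffled


files = ["a.csv", "b.csv", "c.csv", "d.csv"]
metadata = ["meta:a.csv", "meta:b.csv", "meta:c.csv", "meta:d.csv"]

shuffled_files, shuffled_metadata = shuffle_aligned([files, metadata], seed=42)
```

After shuffling, position `i` in both lists still refers to the same shard, which is the property the PR is after.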

1,141,520,953 | 3,745 | Add mIoU metric | closed | [

"Hmm the doctest failed again - maybe the full result needs to be on one single line",

"cc @lhoestq for the final review",

"Cool ! Feel free to merge if it's all good for you"

] | 2022-02-17T15:52:17 | 2022-03-08T13:20:26 | 2022-03-08T13:20:26 | This PR adds the mean Intersection-over-Union metric to the library, useful for tasks like semantic segmentation.

It is entirely based on mmseg's [implementation](https://github.com/open-mmlab/mmsegmentation/blob/master/mmseg/core/evaluation/metrics.py).

I've removed any PyTorch dependency, and rely on Numpy only... | NielsRogge | https://github.com/huggingface/datasets/pull/3745 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3745",

"html_url": "https://github.com/huggingface/datasets/pull/3745",

"diff_url": "https://github.com/huggingface/datasets/pull/3745.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3745.patch",

"merged_at": "2022-03-08T13:20... | true |

1,141,461,165 | 3,744 | Better shards shuffling in streaming mode | closed | [] | 2022-02-17T15:07:21 | 2022-02-23T15:00:58 | 2022-02-23T15:00:58 | Sometimes a dataset script has a `_split_generators` that returns several files as well as the corresponding metadata of each file. It often happens that they end up in two separate lists in the `gen_kwargs`:

```python

gen_kwargs = {

"files": [os.path.join(data_dir, filename) for filename in all_files],

"me... | lhoestq | https://github.com/huggingface/datasets/issues/3744 | null | false |

1,141,176,011 | 3,743 | initial monash time series forecasting repository | closed | [

"_The documentation is not available anymore as the PR was closed or merged._",

"The CI fails are unrelated to this PR, merging !",

"thanks 🙇🏽 "

] | 2022-02-17T10:51:31 | 2022-03-21T09:54:41 | 2022-03-21T09:50:16 | null | kashif | https://github.com/huggingface/datasets/pull/3743 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3743",

"html_url": "https://github.com/huggingface/datasets/pull/3743",

"diff_url": "https://github.com/huggingface/datasets/pull/3743.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3743.patch",

"merged_at": "2022-03-21T09:50... | true |

1,141,174,549 | 3,742 | Fix ValueError message formatting in int2str | closed | [] | 2022-02-17T10:50:08 | 2022-02-17T15:32:02 | 2022-02-17T15:32:02 | Hi!

I bumped into this particular `ValueError` during my work (because an instance of `np.int64` was passed instead of regular Python `int`), and so I had to `print(type(values))` myself. Apparently, it's just the missing `f` to make message an f-string.

It ain't much for a contribution, but it's honest work. Hop... | aaakulchyk | https://github.com/huggingface/datasets/pull/3742 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3742",

"html_url": "https://github.com/huggingface/datasets/pull/3742",

"diff_url": "https://github.com/huggingface/datasets/pull/3742.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3742.patch",

"merged_at": "2022-02-17T15:32... | true |
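The kind of bug fixed in #3742 above, a missing `f` prefix on an error message, is easy to reproduce in isolation. The message text below is made up for illustration and is not the library's actual wording.

```python
values = 3.5  # hypothetical bad input: a float where an int is expected

# Without the `f` prefix, the braces are kept literally and nothing is interpolated.
broken = "Invalid value {values!r} of type {type(values).__name__}"

# With the `f` prefix, the expressions inside the braces are evaluated.
fixed = f"Invalid value {values!r} of type {type(values).__name__}"
```

Only the second message tells the user which value and type caused the error.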

1,141,132,649 | 3,741 | Rm sphinx doc | closed | [] | 2022-02-17T10:11:37 | 2022-02-17T10:15:17 | 2022-02-17T10:15:12 | Checklist

- [x] Update circle ci yaml

- [x] Delete sphinx static & python files in docs dir

- [x] Update readme in docs dir

- [ ] Update docs config in setup.py | mishig25 | https://github.com/huggingface/datasets/pull/3741 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3741",

"html_url": "https://github.com/huggingface/datasets/pull/3741",

"diff_url": "https://github.com/huggingface/datasets/pull/3741.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3741.patch",

"merged_at": null

} | true |

1,140,720,739 | 3,740 | Support streaming for pubmed | closed | [

"@albertvillanova just FYI, since you were so helpful with the previous pubmed issue :) ",

"IIRC streaming from FTP is not fully tested yet, so I'm fine with switching to HTTPS for now, as long as the download speed/availability is great",

"@albertvillanova Thanks for pointing me to the `ET` module replacement.... | 2022-02-17T00:18:22 | 2022-02-18T14:42:13 | 2022-02-18T14:42:13 | This PR makes some minor changes to the `pubmed` dataset to allow for `streaming=True`. Fixes #3739.

Basically, I followed the C4 dataset which works in streaming mode as an example, and made the following changes:

* Change URL prefix from `ftp://` to `https://`

* Explicitly `open` the filename and pass the XML ... | abhi-mosaic | https://github.com/huggingface/datasets/pull/3740 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3740",

"html_url": "https://github.com/huggingface/datasets/pull/3740",

"diff_url": "https://github.com/huggingface/datasets/pull/3740.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3740.patch",

"merged_at": "2022-02-18T14:42... | true |
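As background for the XML handling discussed around #3740 above: the standard-library `xml.etree.ElementTree.parse` accepts an already-open file object as well as a path. The sketch below shows that with an in-memory file; the XML snippet is made up and is far smaller than a real PubMed file, and this is not the dataset script itself.

```python
import io
import xml.etree.ElementTree as ET

# A tiny, made-up document standing in for a real data file.
xml_bytes = (
    b"<PubmedArticleSet>"
    b"<PubmedArticle><PMID>1</PMID></PubmedArticle>"
    b"<PubmedArticle><PMID>2</PMID></PubmedArticle>"
    b"</PubmedArticleSet>"
)

# Any binary file-like object works here, not only a path on disk.
with io.BytesIO(xml_bytes) as handle:
    tree = ET.parse(handle)

pmids = [element.text for element in tree.getroot().iter("PMID")]
```

Since the parser only needs a readable object, the source of the bytes (local file, remote stream, archive member) is interchangeable.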

1,140,329,189 | 3,739 | Pubmed dataset does not work in streaming mode | closed | [

"Thanks for reporting, @abhi-mosaic (related to #3655).\r\n\r\nPlease note that `xml.etree.ElementTree.parse` already supports streaming:\r\n- #3476\r\n\r\nNo need to refactor to use `open`/`xopen`. It is enough to import the package `as ET` (instead of `as etree`)."

] | 2022-02-16T17:13:37 | 2022-02-18T14:42:13 | 2022-02-18T14:42:13 | ## Describe the bug

Trying to use the `pubmed` dataset with `streaming=True` fails.

## Steps to reproduce the bug

```python

import datasets

pubmed_train = datasets.load_dataset('pubmed', split='train', streaming=True)

print(next(iter(pubmed_train)))

```

## Expected results

I would expect to see the first ... | abhi-mosaic | https://github.com/huggingface/datasets/issues/3739 | null | false |

1,140,164,253 | 3,738 | For data-only datasets, streaming and non-streaming don't behave the same | open | [

"Note that we might change the heuristic and create a different config per file, at least in that case.",

"Hi @severo, thanks for reporting.\r\n\r\nYes, this happens because when non-streaming, a cast of all data is done in order to \"concatenate\" it all into a single dataset (thus the error), while this casting... | 2022-02-16T15:20:57 | 2022-02-21T14:24:55 | null | See https://huggingface.co/datasets/huggingface/transformers-metadata: it only contains two JSON files.

In streaming mode, the files are concatenated, and thus the rows might be dictionaries with different keys:

```python

import datasets as ds

iterable_dataset = ds.load_dataset("huggingface/transformers-metadat... | severo | https://github.com/huggingface/datasets/issues/3738 | null | false |

1,140,148,050 | 3,737 | Make RedCaps streamable | closed | [] | 2022-02-16T15:12:23 | 2022-02-16T15:28:38 | 2022-02-16T15:28:37 | Make RedCaps streamable.

@lhoestq Using `data/redcaps_v1.0_annotations.zip` as a download URL gives an error locally when running `datasets-cli test` (will investigate this another time) | mariosasko | https://github.com/huggingface/datasets/pull/3737 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3737",

"html_url": "https://github.com/huggingface/datasets/pull/3737",

"diff_url": "https://github.com/huggingface/datasets/pull/3737.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3737.patch",

"merged_at": "2022-02-16T15:28... | true |

1,140,134,483 | 3,736 | Local paths in common voice | closed | [

"I just changed to `dl_manager.is_streaming` rather than an additional parameter `streaming` that has to be handled by the DatasetBuilder class - this way the streaming logic doesn't interfere with the base builder's code.\r\n\r\nI think it's better this way, but let me know if you preferred the previous way and I ... | 2022-02-16T15:01:29 | 2022-09-21T14:58:38 | 2022-02-22T09:13:43 | Continuation of https://github.com/huggingface/datasets/pull/3664:

- pass the `streaming` parameter to _split_generator

- update @anton-l's code to use this parameter for `common_voice`

- add a comment to explain why we use `download_and_extract` in non-streaming and `iter_archive` in streaming

Now the `common_... | lhoestq | https://github.com/huggingface/datasets/pull/3736 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3736",

"html_url": "https://github.com/huggingface/datasets/pull/3736",

"diff_url": "https://github.com/huggingface/datasets/pull/3736.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3736.patch",

"merged_at": "2022-02-22T09:13... | true |

1,140,087,891 | 3,735 | Performance of `datasets` at scale | open | [

"> using command line git-lfs - [...] 300MB/s!\r\n\r\nwhich server location did you upload from?",

"From GCP region `us-central1-a`.",

"The most surprising part to me is the saving time. Wondering if it could be due to compression (`ParquetWriter` uses SNAPPY compression by default; it can be turned off with `... | 2022-02-16T14:23:32 | 2024-06-27T01:17:48 | null | # Performance of `datasets` at 1TB scale

## What is this?

During the processing of a large dataset I monitored the performance of the `datasets` library to see if there are any bottlenecks. The insights of this analysis could guide the decision making to improve the performance of the library.

## Dataset

The da... | lvwerra | https://github.com/huggingface/datasets/issues/3735 | null | false |

1,140,050,336 | 3,734 | Fix bugs in NewsQA dataset | closed | [] | 2022-02-16T13:51:28 | 2022-02-17T07:54:26 | 2022-02-17T07:54:25 | Fix #3733. | albertvillanova | https://github.com/huggingface/datasets/pull/3734 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3734",

"html_url": "https://github.com/huggingface/datasets/pull/3734",

"diff_url": "https://github.com/huggingface/datasets/pull/3734.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3734.patch",

"merged_at": "2022-02-17T07:54... | true |

1,140,011,378 | 3,733 | Bugs in NewsQA dataset | closed | [] | 2022-02-16T13:17:37 | 2022-02-17T07:54:25 | 2022-02-17T07:54:25 | ## Describe the bug

NewsQA dataset has the following bugs:

- the field `validated_answers` is an exact copy of the field `answers` but with the addition of `'count': [0]` to each dict

- the field `badQuestion` does not appear in `answers` nor `validated_answers`

## Steps to reproduce the bug

By inspecting the da... | albertvillanova | https://github.com/huggingface/datasets/issues/3733 | null | false |

1,140,004,022 | 3,732 | Support streaming in size estimation function in `push_to_hub` | closed | [

"Would this allow including the size in the dataset info without downloading the files? Related to https://github.com/huggingface/datasets/pull/3670",

"@severo I don't think so. We could use this to get `info.download_checksums[\"num_bytes\"]`, but we must process the files to get the rest of the size info. "

] | 2022-02-16T13:10:48 | 2022-02-21T18:18:45 | 2022-02-21T18:18:44 | This PR adds the streamable version of `os.path.getsize` (`fsspec` can return `None`, so we fall back to `fs.open` to make it more robust) to account for possible streamable paths in the nested `extra_nbytes_visitor` function inside `push_to_hub`. | mariosasko | https://github.com/huggingface/datasets/pull/3732 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3732",

"html_url": "https://github.com/huggingface/datasets/pull/3732",

"diff_url": "https://github.com/huggingface/datasets/pull/3732.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3732.patch",

"merged_at": "2022-02-21T18:18... | true |

1,139,626,362 | 3,731 | Fix Multi-News dataset metadata and card | closed | [] | 2022-02-16T07:14:57 | 2022-02-16T08:48:47 | 2022-02-16T08:48:47 | Fix #3730. | albertvillanova | https://github.com/huggingface/datasets/pull/3731 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3731",

"html_url": "https://github.com/huggingface/datasets/pull/3731",

"diff_url": "https://github.com/huggingface/datasets/pull/3731.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3731.patch",

"merged_at": "2022-02-16T08:48... | true |

1,139,545,613 | 3,730 | Checksum Error when loading multi-news dataset | closed | [

"Thanks for reporting @byw2.\r\nWe are fixing it.\r\nIn the meantime, you can load the dataset by passing `ignore_verifications=True`:\r\n ```python\r\ndataset = load_dataset(\"multi_news\", ignore_verifications=True)"

] | 2022-02-16T05:11:08 | 2022-02-16T20:05:06 | 2022-02-16T08:48:46 | ## Describe the bug

When using the `load_dataset` function from the `datasets` module to load the Multi-News dataset, the dataset is not loaded and a checksum error is raised instead.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("multi_news")

```

## Expected results

... | byw2 | https://github.com/huggingface/datasets/issues/3730 | null | false |

1,139,398,442 | 3,729 | Wrong number of examples when loading a text dataset | closed | [

"Hi @kg-nlp, thanks for reporting.\r\n\r\nThat is weird... I guess we would need some sample data file where this behavior appears to reproduce the bug for further investigation... ",

"OK, I found the reason why the two results are not the same.\r\nThere is a U+2029 (paragraph separator) character in the text, the datasets will split sentence accord... | 2022-02-16T01:13:31 | 2022-03-15T16:16:09 | 2022-03-15T16:16:09 | ## Describe the bug

When I use `load_dataset` to read a txt file, I find that the number of samples is incorrect.

## Steps to reproduce the bug

```

fr = open('train.txt','r',encoding='utf-8').readlines()

print(len(fr)) # 1199637

datasets = load_dataset('text', data_files={'train': ['train.txt']}, streaming... | kg-nlp | https://github.com/huggingface/datasets/issues/3729 | null | false |

1,139,303,614 | 3,728 | VoxPopuli | closed | [

"duplicate of https://github.com/huggingface/datasets/issues/2300"

] | 2022-02-15T23:04:55 | 2022-02-16T18:49:12 | 2022-02-16T18:49:12 | ## Adding a Dataset

- **Name:** VoxPopuli

- **Description:** A Large-Scale Multilingual Speech Corpus

- **Paper:** https://arxiv.org/pdf/2101.00390.pdf

- **Data:** https://github.com/facebookresearch/voxpopuli

- **Motivation:** one of the largest (if not the largest) multilingual speech corpus: 400K hours of multi... | VictorSanh | https://github.com/huggingface/datasets/issues/3728 | null | false |

1,138,979,732 | 3,727 | Patch all module attributes in its namespace | closed | [] | 2022-02-15T17:12:27 | 2022-02-17T17:06:18 | 2022-02-17T17:06:17 | When patching module attributes, only those defined in its `__all__` variable were considered by default (only falling back to `__dict__` if `__all__` was None).

However those are only a subset of all the module attributes in its namespace (`__dict__` variable).

This PR fixes the problem of modules that have non-... | albertvillanova | https://github.com/huggingface/datasets/pull/3727 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3727",

"html_url": "https://github.com/huggingface/datasets/pull/3727",

"diff_url": "https://github.com/huggingface/datasets/pull/3727.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3727.patch",

"merged_at": "2022-02-17T17:06... | true |
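
The difference between `__all__` and `__dict__` described in this PR can be sketched with the standard library alone. This is only an illustration under stated assumptions: `fake_pandas`, `Namespace` and `copy_attrs` are hypothetical names, and the code is not the `_PatchedModuleObj` implementation from `datasets`.

```python
import types

# Hypothetical stand-in for a third-party module: it lists `read_csv` in
# `__all__`, but `__version__` only lives in its namespace (`__dict__`).
fake_pandas = types.ModuleType("fake_pandas")
fake_pandas.__version__ = "1.4.0"
fake_pandas.read_csv = lambda path: f"read {path}"
fake_pandas.__all__ = ["read_csv"]


class Namespace:
    """Plain attribute container used as the copy target."""


def copy_attrs(module, use_all_only):
    """Copy module attributes onto a new object.

    Assumption: illustrative helper only, not an API of `datasets`.
    """
    if use_all_only and getattr(module, "__all__", None) is not None:
        names = list(module.__all__)
    else:
        names = list(vars(module))  # vars(module) is module.__dict__
    target = Namespace()
    for name in names:
        setattr(target, name, getattr(module, name))
    return target


restricted = copy_attrs(fake_pandas, use_all_only=True)
complete = copy_attrs(fake_pandas, use_all_only=False)

print(hasattr(restricted, "__version__"))
print(hasattr(complete, "__version__"))
```

Copying from the full namespace keeps attributes such as `__version__` reachable on the copy, which is the kind of lookup that failed in #3724.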

1,138,870,362 | 3,726 | Use config pandas version in CSV dataset builder | closed | [] | 2022-02-15T15:47:49 | 2022-02-15T16:55:45 | 2022-02-15T16:55:44 | Fix #3724. | albertvillanova | https://github.com/huggingface/datasets/pull/3726 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3726",

"html_url": "https://github.com/huggingface/datasets/pull/3726",

"diff_url": "https://github.com/huggingface/datasets/pull/3726.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3726.patch",

"merged_at": "2022-02-15T16:55... | true |

1,138,835,625 | 3,725 | Pin pandas to avoid bug in streaming mode | closed | [] | 2022-02-15T15:21:00 | 2022-02-15T15:52:38 | 2022-02-15T15:52:37 | Temporarily pin pandas version to avoid bug in streaming mode (patching no longer works).

Related to #3724. | albertvillanova | https://github.com/huggingface/datasets/pull/3725 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3725",

"html_url": "https://github.com/huggingface/datasets/pull/3725",

"diff_url": "https://github.com/huggingface/datasets/pull/3725.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3725.patch",

"merged_at": "2022-02-15T15:52... | true |

1,138,827,681 | 3,724 | Bug while streaming CSV dataset with pandas 1.4 | closed | [] | 2022-02-15T15:16:19 | 2022-02-15T16:55:44 | 2022-02-15T16:55:44 | ## Describe the bug

If we upgrade to pandas `1.4`, the patching of the pandas module no longer works:

```

AttributeError: '_PatchedModuleObj' object has no attribute '__version__'

```

## Steps to reproduce the bug

```

pip install pandas==1.4

```

```python

from datasets import load_dataset

ds = load_dat... | albertvillanova | https://github.com/huggingface/datasets/issues/3724 | null | false |

1,138,789,493 | 3,723 | Fix flatten of complex feature types | closed | [

"Apparently the merge brought back some tests that use `flatten_()` that we removed recently",

"_The documentation is not available anymore as the PR was closed or merged._"

] | 2022-02-15T14:45:33 | 2022-03-18T17:32:26 | 2022-03-18T17:28:14 | Fix `flatten` for the following feature types: Image/Audio, Translation, and TranslationVariableLanguages.

Inspired by `cast`/`table_cast`, I've introduced a `table_flatten` function to handle the Image/Audio types.

CC: @SBrandeis

Fix #3686.

| mariosasko | https://github.com/huggingface/datasets/pull/3723 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3723",

"html_url": "https://github.com/huggingface/datasets/pull/3723",

"diff_url": "https://github.com/huggingface/datasets/pull/3723.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3723.patch",

"merged_at": "2022-03-18T17:28... | true |

1,138,770,211 | 3,722 | added electricity load diagram dataset | closed | [] | 2022-02-15T14:29:29 | 2022-02-16T18:53:21 | 2022-02-16T18:48:07 | Initial Electricity Load Diagram time series dataset. | kashif | https://github.com/huggingface/datasets/pull/3722 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3722",

"html_url": "https://github.com/huggingface/datasets/pull/3722",

"diff_url": "https://github.com/huggingface/datasets/pull/3722.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3722.patch",

"merged_at": "2022-02-16T18:48... | true |

1,137,617,108 | 3,721 | Multi-GPU support for `FaissIndex` | closed | [

"Any love?",

"Hi, any update?",

"@albertvillanova Sorry for bothering you again, quick follow up: is there anything else you want me to add / modify?",

"Hi @rentruewang , we updated the documentation on `master`, could you merge `master` into your branch please ?",

"@lhoestq I've merge `huggingface/datasets... | 2022-02-14T17:26:51 | 2022-03-07T16:28:57 | 2022-03-07T16:28:56 | Per #3716 , current implementation does not take into consideration that `faiss` can run on multiple GPUs.

In this commit, I provided multi-GPU support for `FaissIndex` by modifying the device management in `IndexableMixin.add_faiss_index` and `FaissIndex.load`.

Now users are able to pass in

1. a positive intege... | rentruewang | https://github.com/huggingface/datasets/pull/3721 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3721",

"html_url": "https://github.com/huggingface/datasets/pull/3721",

"diff_url": "https://github.com/huggingface/datasets/pull/3721.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3721.patch",

"merged_at": "2022-03-07T16:28... | true |

1,137,537,080 | 3,720 | Builder Configuration Update Required on Common Voice Dataset | closed | [

"Hi @aasem, thanks for reporting.\r\n\r\nPlease note that currently Common Voice is hosted on our Hub as a community dataset by the Mozilla Foundation. See all Common Voice versions here: https://huggingface.co/mozilla-foundation\r\n\r\nMaybe we should add an explanatory note in our \"legacy\" Common Voice canonical... | 2022-02-14T16:21:41 | 2024-04-28T18:03:08 | 2024-04-28T18:03:08 | Missing language in Common Voice dataset

**Link:** https://huggingface.co/datasets/common_voice

I tried to load the Urdu dataset using `load_dataset("common_voice", "ur", split="train+validation")` but couldn't because the builder configuration was not found. I checked the source file here for the supported languages:

ht... | aasem | https://github.com/huggingface/datasets/issues/3720 | null | false |

1,137,237,622 | 3,719 | Check if indices values in `Dataset.select` are within bounds | closed | [] | 2022-02-14T12:31:41 | 2022-02-14T19:19:22 | 2022-02-14T19:19:22 | Fix #3707

Instead of reusing `_check_valid_index_key` from `datasets.formatting`, I defined a new function to provide a more meaningful error message.

| mariosasko | https://github.com/huggingface/datasets/pull/3719 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3719",

"html_url": "https://github.com/huggingface/datasets/pull/3719",

"diff_url": "https://github.com/huggingface/datasets/pull/3719.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3719.patch",

"merged_at": "2022-02-14T19:19... | true |
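
A bounds check of the kind this PR describes could look roughly like the sketch below. It is not the function added in the PR: the names `check_indices_in_bounds` and `select_rows` are hypothetical, a plain list stands in for a dataset, and accepting negative indices down to `-size` is an assumption.

```python
def check_indices_in_bounds(indices, size):
    """Raise IndexError for any index outside [-size, size).

    Assumption: negative indices are allowed, as with Python lists.
    """
    for index in indices:
        if not -size <= index < size:
            raise IndexError(
                f"Index {index} out of range for dataset of size {size}."
            )


def select_rows(rows, indices):
    """Return the selected rows, failing loudly instead of wrapping around."""
    check_indices_in_bounds(indices, len(rows))
    return [rows[i] for i in indices]


rows = ["a", "b", "c"]
print(select_rows(rows, [0, 2]))

try:
    select_rows(rows, [3])
except IndexError as err:
    print(err)
```

Raising here replaces the earlier `index % len(dset)` wrap-around mentioned in #3707 with an explicit error.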

1,137,196,388 | 3,718 | Fix Evidence Infer Treatment dataset | closed | [] | 2022-02-14T11:58:07 | 2022-02-14T13:21:45 | 2022-02-14T13:21:44 | This PR:

- fixes a bug in the script, by removing an unnamed column with the row index: fix KeyError

- fixes the metadata JSON, by adding both configurations (1.1 and 2.0): fix ExpectedMoreDownloadedFiles

- updates the dataset card

Fix #3515. | albertvillanova | https://github.com/huggingface/datasets/pull/3718 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3718",

"html_url": "https://github.com/huggingface/datasets/pull/3718",

"diff_url": "https://github.com/huggingface/datasets/pull/3718.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3718.patch",

"merged_at": "2022-02-14T13:21... | true |

1,137,183,015 | 3,717 | wrong condition in `Features ClassLabel encode_example` | closed | [

"Hi @Tudyx, \r\n\r\nPlease note that in Python, the boolean NOT operator (`not`) has lower precedence than comparison operators (`<=`, `<`), thus the expression you mention is equivalent to:\r\n```python\r\n not (-1 <= example_data < self.num_classes)\r\n```\r\n\r\nAlso note that as expected, the exception is raise... | 2022-02-14T11:44:35 | 2022-02-14T15:09:36 | 2022-02-14T15:07:43 | ## Describe the bug

The `encode_example` function in *features.py* seems to have a wrong condition.

```python

if not -1 <= example_data < self.num_classes:

raise ValueError(f"Class label {example_data:d} greater than configured num_classes {self.num_classes}")

```

## Expected results

The `not - 1` co... | Tudyx | https://github.com/huggingface/datasets/issues/3717 | null | false |
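
The precedence point raised in the reply to this issue can be checked with a short sketch. The function names `is_rejected` and `is_rejected_explicit` are made up for the comparison; only the condition itself comes from the issue.

```python
import ast


def is_rejected(example_data, num_classes):
    # Condition as written in the issue: `not` applies to the whole
    # chained comparison, not to `-1`.
    return not -1 <= example_data < num_classes


def is_rejected_explicit(example_data, num_classes):
    # Same condition with explicit parentheses.
    return not (-1 <= example_data < num_classes)


for value in (-2, -1, 0, 2, 3):
    assert is_rejected(value, 3) == is_rejected_explicit(value, 3)

# The parsed expression is a `not` wrapped around a comparison.
tree = ast.parse("not -1 <= x < n", mode="eval")
print(type(tree.body).__name__, type(tree.body.operand).__name__)
```

With `num_classes = 3`, only values below `-1` or at least `3` are rejected, so the original condition behaves as intended.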

1,136,831,092 | 3,716 | `FaissIndex` to support multiple GPU and `custom_index` | closed | [

"Hi @rentruewang, thanks for reporting and for your PR!!! We should definitely support this. ",

"@albertvillanova Great! :)"

] | 2022-02-14T06:21:43 | 2022-03-07T16:28:56 | 2022-03-07T16:28:56 | **Is your feature request related to a problem? Please describe.**

Currently, because `device` is of the type `int | None`, to leverage `faiss-gpu`'s multi-gpu support, you need to create a `custom_index`. However, if using a `custom_index` created by e.g. `faiss.index_cpu_to_all_gpus`, then `FaissIndex.save` does not ... | rentruewang | https://github.com/huggingface/datasets/issues/3716 | null | false |

1,136,107,879 | 3,715 | Fix bugs in msr_sqa dataset | closed | [

"This is what I get when I run the tests:\r\n\r\nFAILED tests/test_dataset_common.py::LocalDatasetTest::test_load_dataset_all_configs_msr_sqa - ValueError: Unknown split \"validation\". Should be one of ['train', 'test'].\r\n\r\nIt makes no sense to me😂. \r\n",

"@albertvillanova Does this PR have some additional fixes com... | 2022-02-13T16:37:30 | 2022-10-03T09:10:02 | 2022-10-03T09:08:06 | The last version has many problems:

1) Errors in table loading. Splitting each row on a single comma instead of using pandas is wrong.

2) Duplicated ids in the `_generate_examples` function.

3) Missing history-question information, which makes the dataset hard to use.

I fixed it with reference to https://github.com/HKUNLP/UnifiedSKG. And we test ... | Timothyxxx | https://github.com/huggingface/datasets/pull/3715 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3715",

"html_url": "https://github.com/huggingface/datasets/pull/3715",

"diff_url": "https://github.com/huggingface/datasets/pull/3715.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3715.patch",

"merged_at": "2022-10-03T09:08... | true |

1,136,105,530 | 3,714 | tatoeba_mt: File not found error and key error | closed | [

"Looks like I solved my problems ..."

] | 2022-02-13T16:35:45 | 2022-02-13T20:44:04 | 2022-02-13T20:44:04 | ## Dataset viewer issue for 'tatoeba_mt'

**Link:** https://huggingface.co/datasets/Helsinki-NLP/tatoeba_mt

My data loader script does not seem to work.

The files are part of the local repository but cannot be found. An example where it should work is the subset for "afr-eng".

Another problem is that I do not ... | jorgtied | https://github.com/huggingface/datasets/issues/3714 | null | false |

1,135,692,572 | 3,713 | Rm sphinx doc | closed | [

"Thanks for pushing this :)\r\nOne minor comment regarding the PR itself - I noticed that some changes are coming from the upstream master, this might be due to a rebase. Would be nice if this PR doesn't include them for readability, feel free to open a new one if necessary",

"Closing in favour of https://github.com/h... | 2022-02-13T11:26:31 | 2022-02-17T10:18:46 | 2022-02-17T10:12:09 | Checklist

- [x] Update circle ci yaml

- [x] Delete sphinx static & python files in docs dir

- [x] Update readme in docs dir

- [ ] Update docs config in setup.py | mishig25 | https://github.com/huggingface/datasets/pull/3713 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3713",

"html_url": "https://github.com/huggingface/datasets/pull/3713",

"diff_url": "https://github.com/huggingface/datasets/pull/3713.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3713.patch",

"merged_at": null

} | true |

1,134,252,505 | 3,712 | Fix the error of msr_sqa dataset | closed | [] | 2022-02-12T16:27:54 | 2022-02-13T11:21:05 | 2022-02-13T11:21:05 | Fix the error in the `_load_table_data` function of the msr_sqa dataset: splitting each row on commas is wrong. | Timothyxxx | https://github.com/huggingface/datasets/pull/3712 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3712",

"html_url": "https://github.com/huggingface/datasets/pull/3712",

"diff_url": "https://github.com/huggingface/datasets/pull/3712.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3712.patch",

"merged_at": null

} | true |

1,134,050,545 | 3,711 | Fix the error of _load_table_data function in msr_sqa dataset | closed | [] | 2022-02-12T13:20:53 | 2022-02-12T13:30:43 | 2022-02-12T13:30:43 | The `_load_table_data` function from the last version is wrong: splitting each row on commas is incorrect. | Timothyxxx | https://github.com/huggingface/datasets/pull/3711 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3711",

"html_url": "https://github.com/huggingface/datasets/pull/3711",

"diff_url": "https://github.com/huggingface/datasets/pull/3711.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3711.patch",

"merged_at": null

} | true |

1,133,955,393 | 3,710 | Fix CI code quality issue | closed | [] | 2022-02-12T12:05:39 | 2022-02-12T12:58:05 | 2022-02-12T12:58:04 | Fix CI code quality issue introduced by #3695. | albertvillanova | https://github.com/huggingface/datasets/pull/3710 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3710",

"html_url": "https://github.com/huggingface/datasets/pull/3710",

"diff_url": "https://github.com/huggingface/datasets/pull/3710.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3710.patch",

"merged_at": "2022-02-12T12:58... | true |

1,132,997,904 | 3,709 | Set base path to hub url for canonical datasets | closed | [

"If we agree to have data files in a dedicated directory \"data/\" then we should be fine. You're right we should not try to edit a dataset script from the repository directly, but from github, in order to avoid conflicts"

] | 2022-02-11T19:23:20 | 2022-02-16T14:02:28 | 2022-02-16T14:02:27 | This should allow canonical datasets to use relative paths to download data files from the Hub

cc @polinaeterna this will be useful if we have audio datasets that are canonical and for which you'd like to host data files | lhoestq | https://github.com/huggingface/datasets/pull/3709 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3709",

"html_url": "https://github.com/huggingface/datasets/pull/3709",

"diff_url": "https://github.com/huggingface/datasets/pull/3709.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3709.patch",

"merged_at": "2022-02-16T14:02... | true |

1,132,968,402 | 3,708 | Loading JSON gets stuck with many workers/threads | open | [

"Hi ! Note that it does `block_size *= 2` until `block_size > len(batch)`, so it doesn't loop indefinitely. What do you mean by \"get stuck indefinitely\" then ? Is this the actual call to `paj.read_json` that hangs ?\r\n\r\n> increasing the `chunksize` argument decreases the chance of getting stuck\r\n\r\nCould yo... | 2022-02-11T18:50:48 | 2023-06-16T11:24:12 | null | ## Describe the bug

Loading a JSON dataset with `load_dataset` can get stuck when running on a machine with many CPUs. This is especially an issue when loading a large dataset on a large machine.

## Steps to reproduce the bug

I originally created the following script to reproduce the issue:

```python

from dat... | lvwerra | https://github.com/huggingface/datasets/issues/3708 | null | false |

1,132,741,903 | 3,707 | `.select`: unexpected behavior with `indices` | closed | [

"Hi! Currently, we compute the final index as `index % len(dset)`. I agree this behavior is somewhat unexpected and that it would be more appropriate to raise an error instead (this is what `df.iloc` in Pandas does, for instance).\r\n\r\n@albertvillanova @lhoestq wdyt?",

"I agree. I think `index % len(dset)` was ... | 2022-02-11T15:20:01 | 2022-02-14T19:19:21 | 2022-02-14T19:19:21 | ## Describe the bug

The `.select` method will not throw when sending `indices` bigger than the dataset length; `indices` will be wrapped instead. This behavior is not documented anywhere, and is not intuitive.

## Steps to reproduce the bug

```python

from datasets import Dataset

ds = Dataset.from_dict({"text": [... | gabegma | https://github.com/huggingface/datasets/issues/3707 | null | false |

1,132,218,874 | 3,706 | Unable to load dataset 'big_patent' | closed | [

"Hi @ankitk2109,\r\n\r\nHave you tried passing the split name with the keyword `split=`? See e.g. an example in our Quick Start docs: https://huggingface.co/docs/datasets/quickstart.html#load-the-dataset-and-model\r\n```python\r\n ds = load_dataset(\"big_patent\", \"d\", split=\"validation\")",

"Hi @albertvillano... | 2022-02-11T09:48:34 | 2022-02-14T15:26:03 | 2022-02-14T15:26:03 | ## Describe the bug

Unable to load the "big_patent" dataset

## Steps to reproduce the bug

```python

load_dataset('big_patent', 'd', 'validation')

```

## Expected results

Download big_patents' validation split from the 'd' subset

## Getting an error saying:

{FileNotFoundError}Local file ..\huggingface\dat... | ankitk2109 | https://github.com/huggingface/datasets/issues/3706 | null | false |

1,132,053,226 | 3,705 | Raise informative error when loading a save_to_disk dataset | closed | [] | 2022-02-11T08:21:03 | 2022-02-11T22:56:40 | 2022-02-11T22:56:39 | People regularly report errors when trying to load a dataset (using `load_dataset`) that was previously saved using `save_to_disk`.

This PR raises an informative error message telling them they should use `load_from_disk` instead.

Close #3700. | albertvillanova | https://github.com/huggingface/datasets/pull/3705 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3705",

"html_url": "https://github.com/huggingface/datasets/pull/3705",

"diff_url": "https://github.com/huggingface/datasets/pull/3705.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3705.patch",

"merged_at": "2022-02-11T22:56... | true |

1,132,042,631 | 3,704 | OSCAR-2109 datasets are misaligned and truncated | closed | [

"Hi @adrianeboyd, thanks for reporting.\r\n\r\nThere is indeed a bug in that community dataset:\r\nLine:\r\n```python\r\nmetadata_and_text_files = list(zip(metadata_files, text_files))\r\n``` \r\nshould be replaced with\r\n```python\r\nmetadata_and_text_files = list(zip(sorted(metadata_files), sorted(text_files)))\... | 2022-02-11T08:14:59 | 2022-03-17T18:01:04 | 2022-03-16T16:21:28 | ## Describe the bug

The `oscar-corpus/OSCAR-2109` data appears to be misaligned and truncated by the dataset builder for subsets that contain more than one part and for cases where the texts contain non-unix newlines.

## Steps to reproduce the bug

A few examples, although I'm not sure how deterministic the par... | adrianeboyd | https://github.com/huggingface/datasets/issues/3704 | null | false |
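
The pairing fix quoted in the reply (sorting both file lists before zipping them) can be illustrated with a small sketch. The file names and the helper `pair_parts` are hypothetical; the real dataset script and its file layout are not shown here.

```python
def pair_parts(metadata_files, text_files):
    """Pair each metadata file with the text file of the same part.

    Assumption: both lists use parallel naming, so sorting each list
    puts matching parts at the same position.
    """
    return list(zip(sorted(metadata_files), sorted(text_files)))


# Hypothetical listing where the two lists arrive in different orders.
metadata_files = ["meta_part_2.jsonl", "meta_part_1.jsonl"]
text_files = ["text_part_1.txt", "text_part_2.txt"]

naive_pairs = list(zip(metadata_files, text_files))
sorted_pairs = pair_parts(metadata_files, text_files)

print(naive_pairs)
print(sorted_pairs)
```

Without sorting, the pairing depends on the order in which the files happen to be listed, which is one way metadata and text can end up misaligned for subsets with more than one part.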

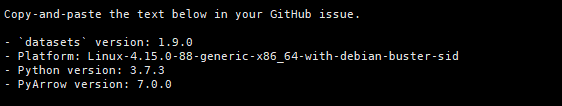

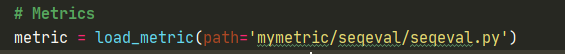

1,131,882,772 | 3,703 | ImportError: To be able to use this metric, you need to install the following dependencies['seqeval'] using 'pip install seqeval' for instance' | closed | [

"\r\nMy datasets version",

"\r\n",

"Hi! Some of our metrics require additional dependencies to w... | 2022-02-11T06:38:42 | 2023-07-11T09:31:59 | 2023-07-11T09:31:59 | hi :

I want to use the seqeval metric. Calling `load_metric('seqeval')` directly fails with a network connection error, so I downloaded seqeval.py to load it locally. Loading code: `metric = load_metric(path='mymetric/seqeval/seqeval.py')`

But I get this error:

Traceback (most recent call last):

File... | zhangyifei1 | https://github.com/huggingface/datasets/issues/3703 | null | false |

1,130,666,707 | 3,702 | Update data URL of lm1b dataset | closed | [

"Hi ! I'm getting some 503 from both the http and https addresses. Do you think we could host this data somewhere else ? (please check if there is a license and if it allows redistribution)",

"Both HTTP and HTTPS links are working now.\r\n\r\nWe are closing this PR."

] | 2022-02-10T18:46:30 | 2022-09-23T11:52:39 | 2022-09-23T11:52:39 | The http address doesn't work anymore | yazdanbakhsh | https://github.com/huggingface/datasets/pull/3702 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3702",

"html_url": "https://github.com/huggingface/datasets/pull/3702",

"diff_url": "https://github.com/huggingface/datasets/pull/3702.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3702.patch",

"merged_at": null

} | true |

1,130,498,738 | 3,701 | Pin ElasticSearch | closed | [] | 2022-02-10T17:15:26 | 2022-02-10T17:31:13 | 2022-02-10T17:31:12 | Until we manage to support ES 8.0, I'm setting the version to `<8.0.0`

Currently we're getting this error on 8.0:

```python

ValueError: Either 'hosts' or 'cloud_id' must be specified

```

when instantiating an `Elasticsearch()` object | lhoestq | https://github.com/huggingface/datasets/pull/3701 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3701",

"html_url": "https://github.com/huggingface/datasets/pull/3701",

"diff_url": "https://github.com/huggingface/datasets/pull/3701.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3701.patch",

"merged_at": "2022-02-10T17:31... | true |

1,130,252,496 | 3,700 | Unable to load a dataset | closed | [

"Hi! `load_dataset` is intended to be used to load a canonical dataset (`wikipedia`), a packaged dataset (`csv`, `json`, ...) or a dataset hosted on the Hub. For local datasets saved with `save_to_disk(\"path/to/dataset\")`, use `load_from_disk(\"path/to/dataset\")`.",

"Maybe we should raise an informative error ... | 2022-02-10T15:05:53 | 2024-07-04T08:39:23 | 2022-02-11T22:56:39 | ## Describe the bug

Unable to load a dataset from Huggingface that I have just saved.

## Steps to reproduce the bug

On Google colab

`! pip install datasets `

`from datasets import load_dataset`

`my_path = "wiki_dataset"`

`dataset = load_dataset('wikipedia', "20200501.fr")`

`dataset.save_to_disk(my_path)`

`... | PaulchauvinAI | https://github.com/huggingface/datasets/issues/3700 | null | false |

1,130,200,593 | 3,699 | Add dev-only config to Natural Questions dataset | closed | [

"Great thanks ! I think we can fix the CI by copying the NQ folder on gcs to 0.0.3. Does that sound good ?",

"I've copied the 0.0.2 folder content to 0.0.3, as suggested.\r\n\r\nI'm updating the dataset card..."

] | 2022-02-10T14:42:24 | 2022-02-11T09:50:22 | 2022-02-11T09:50:21 | As suggested by @lhoestq and @thomwolf, a new config has been added to Natural Questions dataset, so that only dev split can be downloaded.

Fix #413. | albertvillanova | https://github.com/huggingface/datasets/pull/3699 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3699",

"html_url": "https://github.com/huggingface/datasets/pull/3699",

"diff_url": "https://github.com/huggingface/datasets/pull/3699.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3699.patch",

"merged_at": "2022-02-11T09:50... | true |

1,129,864,282 | 3,698 | Add finetune-data CodeFill | closed | [

"Thanks for your contribution, @rgismondi. Are you still interested in adding this dataset?\r\n\r\nWe are removing the dataset scripts from this GitHub repo and moving them to the Hugging Face Hub: https://huggingface.co/datasets\r\n\r\nWe would suggest you create this dataset there. Please, feel free to tell us if... | 2022-02-10T11:12:51 | 2022-10-03T09:36:18 | 2022-10-03T09:36:18 | null | rgismondi | https://github.com/huggingface/datasets/pull/3698 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3698",

"html_url": "https://github.com/huggingface/datasets/pull/3698",

"diff_url": "https://github.com/huggingface/datasets/pull/3698.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3698.patch",

"merged_at": null

} | true |

1,129,795,724 | 3,697 | Add code-fill datasets for pretraining/finetuning/evaluating | closed | [

"Hi ! Thanks for adding this dataset :)\r\n\r\nIt looks like your PR contains many changes in files that are unrelated to your changes, I think it might come from running `make style` with an outdated version of `black`. Could you try opening a new PR that only contains your additions ? (or force push to this PR)"

... | 2022-02-10T10:31:48 | 2022-07-06T15:19:58 | 2022-07-06T15:19:58 | null | rgismondi | https://github.com/huggingface/datasets/pull/3697 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3697",

"html_url": "https://github.com/huggingface/datasets/pull/3697",

"diff_url": "https://github.com/huggingface/datasets/pull/3697.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3697.patch",

"merged_at": null

} | true |

1,129,764,534 | 3,696 | Force unique keys in newsqa dataset | closed | [] | 2022-02-10T10:09:19 | 2022-02-14T08:37:20 | 2022-02-14T08:37:19 | Currently, it may raise `DuplicatedKeysError`.

Fix #3630. | albertvillanova | https://github.com/huggingface/datasets/pull/3696 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3696",

"html_url": "https://github.com/huggingface/datasets/pull/3696",

"diff_url": "https://github.com/huggingface/datasets/pull/3696.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3696.patch",

"merged_at": "2022-02-14T08:37... | true |

1,129,730,148 | 3,695 | Fix ClassLabel to/from dict when passed names_file | closed | [] | 2022-02-10T09:47:10 | 2022-02-11T23:02:32 | 2022-02-11T23:02:31 | Currently, `names_file` is a field of the data class `ClassLabel`, thus appearing when transforming it to dict (when saving infos). Afterwards, when trying to read it from infos, it conflicts with the other field `names`.

This PR removes `names_file` as a field of the data class `ClassLabel`.

- it is only used at ... | albertvillanova | https://github.com/huggingface/datasets/pull/3695 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3695",

"html_url": "https://github.com/huggingface/datasets/pull/3695",

"diff_url": "https://github.com/huggingface/datasets/pull/3695.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3695.patch",

"merged_at": "2022-02-11T23:02... | true |
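
The conflict described in #3695 (a constructor-only argument that leaks into the serialized dict and then clashes with `names` on reload) can be reproduced and avoided with the standard library alone. The sketch below is a minimal illustration, not the actual `ClassLabel` code: `LabelFeature` is a hypothetical stand-in, and using `dataclasses.InitVar` is one way to get the behaviour the PR describes, assumed here rather than confirmed by the text above.

```python
from dataclasses import InitVar, asdict, dataclass
from typing import List, Optional


@dataclass
class LabelFeature:
    """Hypothetical stand-in for a label feature; not the real `ClassLabel`."""

    names: Optional[List[str]] = None
    # InitVar: accepted by __init__ and forwarded to __post_init__,
    # but never stored as a dataclass field, so asdict() does not emit it.
    names_file: InitVar[Optional[str]] = None

    def __post_init__(self, names_file: Optional[str]) -> None:
        if names_file is not None:
            if self.names is not None:
                raise ValueError("Pass either `names` or `names_file`, not both.")
            # Read one label name per non-empty line.
            with open(names_file, encoding="utf-8") as f:
                self.names = [line.strip() for line in f if line.strip()]


feature = LabelFeature(names=["neg", "pos"])
serialized = asdict(feature)            # contains only the "names" key
restored = LabelFeature(**serialized)   # round trip without a names/names_file clash
```

Because `names_file` is only consumed at construction time, the serialized form carries the resolved `names` and nothing else, which is what makes the round trip safe.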

1,128,554,365 | 3,693 | Standardize to `Example::` | closed | [

"Closing because https://github.com/huggingface/datasets/pull/3690/commits/ee0e0935d6105c1390b0e14a7622fbaad3044dbb"

] | 2022-02-09T13:37:13 | 2022-02-17T10:20:55 | 2022-02-17T10:20:52 | null | mishig25 | https://github.com/huggingface/datasets/pull/3693 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3693",

"html_url": "https://github.com/huggingface/datasets/pull/3693",

"diff_url": "https://github.com/huggingface/datasets/pull/3693.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3693.patch",

"merged_at": null

} | true |

1,128,320,004 | 3,692 | Update data URL in pubmed dataset | closed | [

"- I updated the previous dummy data: I just had to rename the file and its directory\r\n - the dummy data zip contains only a single file: `pubmed22n0001.xml.gz`\r\n\r\nThen I discover it fails: https://app.circleci.com/pipelines/github/huggingface/datasets/9800/workflows/173a4433-8feb-4fc6-ab9e-59762084e3e1/jobs... | 2022-02-09T10:06:21 | 2022-02-14T14:15:42 | 2022-02-14T14:15:41 | Fix #3655. | albertvillanova | https://github.com/huggingface/datasets/pull/3692 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3692",

"html_url": "https://github.com/huggingface/datasets/pull/3692",

"diff_url": "https://github.com/huggingface/datasets/pull/3692.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3692.patch",

"merged_at": "2022-02-14T14:15... | true |

1,127,629,306 | 3,691 | Upgrade black to version ~=22.0 | closed | [] | 2022-02-08T18:45:19 | 2022-02-08T19:56:40 | 2022-02-08T19:56:39 | Upgrades the `datasets` library quality tool `black` to use the first stable release of `black`, version 22.0. | LysandreJik | https://github.com/huggingface/datasets/pull/3691 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3691",

"html_url": "https://github.com/huggingface/datasets/pull/3691",

"diff_url": "https://github.com/huggingface/datasets/pull/3691.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3691.patch",

"merged_at": "2022-02-08T19:56... | true |

1,127,493,538 | 3,690 | Update docs to new frontend/UI | closed | [

"We can have the docstrings of the properties that are missing docstrings (from discussion [here](https://github.com/huggingface/doc-builder/pull/96)) here by using your new `inject_arrow_table_documentation` on them as well ?",

"@sgugger & @lhoestq could you help me with what should the `docs` section in setup.py... | 2022-02-08T16:38:09 | 2022-03-03T20:04:21 | 2022-03-03T20:04:20 | ### TLDR: Update `datasets` `docs` to the new syntax (markdown and mdx files) & frontend (as how it looks on [hf.co/transformers](https://huggingface.co/docs/transformers/index))

| Light mode | Dark mode ... | mishig25 | https://github.com/huggingface/datasets/pull/3690 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3690",

"html_url": "https://github.com/huggingface/datasets/pull/3690",

"diff_url": "https://github.com/huggingface/datasets/pull/3690.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3690.patch",

"merged_at": "2022-03-03T20:04... | true |

1,127,422,478 | 3,689 | Fix streaming for servers not supporting HTTP range requests | closed | [

"Does it mean that huge files might end up being downloaded? It would go against the purpose of streaming, I think. At least, this fallback should be an option that could be disabled",

"Yes, it is against the purpose of streaming, but streaming is not possible if the server does not allow HTTP range requests.\n\n... | 2022-02-08T15:41:05 | 2022-02-10T16:51:25 | 2022-02-10T16:51:25 | Some servers do not support HTTP range requests, whereas this is required to stream some file formats (like ZIP).

~~This PR implements a workaround for those cases, by downloading the files locally in a temporary directory (cleaned up by the OS once the process is finished).~~

This PR raises a custom error explaining ... | albertvillanova | https://github.com/huggingface/datasets/pull/3689 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3689",

"html_url": "https://github.com/huggingface/datasets/pull/3689",

"diff_url": "https://github.com/huggingface/datasets/pull/3689.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3689.patch",

"merged_at": "2022-02-10T16:51... | true |
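
To make the range-request requirement from #3689 concrete, here is a minimal sketch of how a client might decide whether a server honoured a `Range: bytes=0-0` probe, and fail with an explanatory error otherwise. All names (`supports_range_requests`, `ensure_streamable`, `RangeRequestsNotSupportedError`) are hypothetical and chosen for illustration; they are not the functions or the error class added by the PR.

```python
from typing import Mapping


class RangeRequestsNotSupportedError(Exception):
    """Hypothetical error type used only in this illustration."""


def supports_range_requests(status_code: int, headers: Mapping[str, str]) -> bool:
    """Interpret the response to a probe request sent with `Range: bytes=0-0`."""
    # Header names are case-insensitive, so normalize before looking them up.
    normalized = {key.lower(): value for key, value in headers.items()}
    # 206 Partial Content plus a Content-Range header means the range was honoured.
    # A plain 200 means the server ignored the Range header and sent the whole body.
    return status_code == 206 and "content-range" in normalized


def ensure_streamable(url: str, status_code: int, headers: Mapping[str, str]) -> None:
    """Raise an explanatory error instead of silently downloading the full file."""
    if not supports_range_requests(status_code, headers):
        raise RangeRequestsNotSupportedError(
            f"The server hosting {url} does not support HTTP range requests, "
            "so this file cannot be streamed; download it in non-streaming mode instead."
        )
```

Keeping the decision logic separate from the network call means it can be exercised with canned status codes and headers, without contacting any server.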

1,127,218,321 | 3,688 | Pyarrow version error | closed | [

"Hi @Zaker237, thanks for reporting.\r\n\r\nThis is weird: the error you get is only thrown if the installed pyarrow version is less than 3.0.0.\r\n\r\nCould you please check that you install pyarrow in the same Python virtual environment where you installed datasets?\r\n\r\nFrom the Python command line (or termina... | 2022-02-08T12:53:59 | 2022-02-09T06:35:33 | 2022-02-09T06:35:32 | ## Describe the bug

I installed datasets (versions 1.17.0, 1.18.0, 1.18.3), but I'm currently not able to import it because of pyarrow. When I try to import it, I get the following error:

`To use datasets, the module pyarrow>=3.0.0 is required, and the current version of pyarrow doesn't match this condition`.

I tried w... | Zaker237 | https://github.com/huggingface/datasets/issues/3688 | null | false |
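
The first reply to #3688 suggests checking which pyarrow version is visible from the same environment as `datasets`. A minimal standard-library sketch of such a check follows. The helper names are hypothetical, the parsing is deliberately simplified (it only reads the leading numeric components), and the `3.0.0` minimum is taken from the error message quoted in the issue.

```python
from importlib import metadata
from typing import Optional, Tuple


def parse_release(version: str) -> Tuple[int, ...]:
    """Return the leading numeric components of a version string, e.g. '6.0.1' -> (6, 0, 1)."""
    parts = []
    for piece in version.split("."):
        digits = ""
        for char in piece:
            if not char.isdigit():
                break
            digits += char
        if not digits:
            break  # stop at the first non-numeric component such as 'dev5'
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str = "3.0.0") -> bool:
    """Compare versions numerically, padding with zeros so that '3' equals '3.0.0'."""
    a, b = parse_release(installed), parse_release(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def installed_version(package: str) -> Optional[str]:
    """Version of `package` as seen by the *current* interpreter, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None
```

Running `installed_version("pyarrow")` with the same interpreter that fails on `import datasets` shows whether an old or missing pyarrow in that environment explains the error.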

1,127,154,766 | 3,687 | Can't get the text data when calling to_tf_dataset | closed | [

"cc @Rocketknight1 ",

"You are correct that `to_tf_dataset` only handles numerical columns right now, yes, though this is a limitation we might remove in future! The main reason we do this is that our models mostly do not include the tokenizer as a model layer, because it's very difficult to compile some of them ... | 2022-02-08T11:52:10 | 2023-01-19T14:55:18 | 2023-01-19T14:55:18 | I am working with the SST2 dataset, and am using TensorFlow 2.5

I'd like to convert it to a `tf.data.Dataset` by calling the `to_tf_dataset` method.

The following snippet is what I am using to achieve this:

```

from datasets import load_dataset

from transformers import DefaultDataCollator

data_collator = Defa... | phrasenmaeher | https://github.com/huggingface/datasets/issues/3687 | null | false |

1,127,137,290 | 3,686 | `Translation` features cannot be `flatten`ed | closed | [

"Thanks for reporting, @SBrandeis! Some additional feature types that don't behave as expected when flattened: `Audio`, `Image` and `TranslationVariableLanguages`"

] | 2022-02-08T11:33:48 | 2022-03-18T17:28:13 | 2022-03-18T17:28:13 | ## Describe the bug

[`Dataset.flatten`](https://github.com/huggingface/datasets/blob/master/src/datasets/arrow_dataset.py#L1265) fails for columns with feature [`Translation`](https://github.com/huggingface/datasets/blob/3edbeb0ec6519b79f1119adc251a1a6b379a2c12/src/datasets/features/translation.py#L8)

## Steps to... | SBrandeis | https://github.com/huggingface/datasets/issues/3686 | null | false |

1,126,240,444 | 3,685 | Add support for `Audio` and `Image` feature in `push_to_hub` | closed | [

"> Cool thanks !\r\n> \r\n> Also cc @patrickvonplaten @anton-l it means that when calling push_to_hub, the audio bytes are embedded in the parquet files (we don't upload the audio files themselves)\r\n\r\nJust to verify quickly the size of the dataset doesn't change in this case no? E.g. if a dataset has say 20GB i... | 2022-02-07T16:47:16 | 2022-02-14T18:14:57 | 2022-02-14T18:04:58 | Add support for the `Audio` and the `Image` feature in `push_to_hub`.

The idea is to remove local path information and store file content under "bytes" in the Arrow table before the push.

My initial approach (https://github.com/huggingface/datasets/commit/34c652afeff9686b6b8bf4e703c84d2205d670aa) was to use a ma... | mariosasko | https://github.com/huggingface/datasets/pull/3685 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3685",

"html_url": "https://github.com/huggingface/datasets/pull/3685",

"diff_url": "https://github.com/huggingface/datasets/pull/3685.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3685.patch",

"merged_at": "2022-02-14T18:04... | true |
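
The idea stated in #3685, dropping local path information and storing the file content under "bytes" before the push, can be sketched on a single example value. This is an illustration under assumptions, not the library's implementation: `embed_file_content` and `read_file_bytes` are hypothetical helpers, the `{"path", "bytes"}` dict layout is assumed from the description, and setting `path` to `None` is one possible reading of "remove local path information".

```python
from pathlib import Path
from typing import Callable, Dict, Optional


def read_file_bytes(path: str) -> bytes:
    """Default reader: load the whole file from the local filesystem."""
    return Path(path).read_bytes()


def embed_file_content(
    item: Dict[str, Optional[object]],
    read_bytes: Callable[[str], bytes] = read_file_bytes,
) -> Dict[str, Optional[object]]:
    """Return a copy of `item` that carries the file content instead of a local path."""
    if item.get("bytes") is not None:
        # Content is already embedded: only drop the machine-specific path.
        return {"path": None, "bytes": item["bytes"]}
    if item.get("path") is None:
        raise ValueError("Item has neither 'bytes' nor 'path'; nothing to embed.")
    # Read the file once and keep its content, so the pushed data is self-contained.
    return {"path": None, "bytes": read_bytes(item["path"])}
```

Passing the reader as a parameter keeps the transformation easy to try out with an in-memory stand-in rather than real audio or image files.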

1,125,133,664 | 3,684 | [fix]: iwslt2017 download urls | closed | [

"Hi ! Thanks for the fix ! Do you know where this new URL comes from ?\r\n\r\nAlso we try to not use Google Drive if possible, since it has download quota limitations. Do you know if the data is available from another host than Google Drive ?",

"Oh, I found it just by following the link from the [IWSLT2017 homepa... | 2022-02-06T07:56:55 | 2022-09-22T16:20:19 | 2022-09-22T16:20:18 | Fixes #2076. | msarmi9 | https://github.com/huggingface/datasets/pull/3684 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3684",

"html_url": "https://github.com/huggingface/datasets/pull/3684",

"diff_url": "https://github.com/huggingface/datasets/pull/3684.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3684.patch",

"merged_at": null

} | true |

1,124,458,371 | 3,683 | added told-br (brazilian hate speech) dataset | closed | [

"Amazing thank you ! Feel free to regenerate the `dataset_infos.json` to account for the feature type change, and then I think we'll be good to merge :)",

"Great thank you ! merging :)"

] | 2022-02-04T17:44:32 | 2022-02-07T21:14:52 | 2022-02-07T21:14:52 | Hey,